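Offline Installation of KubeSphere

Starting with v2.1.0, KubeKey introduced the concepts of manifest and artifact, which give users a simple and convenient way to deploy KubeSphere and K8s clusters offline.

- A manifest is a text file that describes the current Kubernetes cluster and defines what the artifact must contain.

- To prepare, use the manifest to export the artifact file. For the offline deployment itself, KubeKey and the artifact are all you need to quickly and easily deploy the Harbor image registry, KubeSphere, and the K8s cluster in the target environment.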

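Building the offline deployment resources

Downloading KubeKey and the dependency packages

Downloading KubeKey

Give the virtual machine plenty of storage. With 80G in total, centos-root took 50G and centos-home was left with only 27G, which is nowhere near enough when working under /home.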
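Before downloading anything, it can help to check how much room the working directory really has. The following is a minimal sketch, not part of the original procedure; the function names `free_gib` and `has_free_gib` and the threshold are illustrative assumptions.

```shell
#!/usr/bin/env bash

# Print the free space, in whole GiB, of the filesystem holding the given directory.
free_gib() {
  local dir="${1:-.}"
  # df -P forces one line per filesystem; -k reports 1024-byte blocks; column 4 is "Available".
  df -Pk "$dir" | awk 'NR == 2 { print int($4 / 1024 / 1024) }'
}

# Succeed only if the directory has at least the requested number of GiB free.
has_free_gib() {
  local dir="$1" need="$2"
  [ "$(free_gib "$dir")" -ge "$need" ]
}

# Hypothetical threshold: 0 always passes; raise it to the size you expect to need.
if has_free_gib . 0; then
  echo "free space in $(pwd): $(free_gib .) GiB"
fi
```

Calling `has_free_gib /home 50` (the number is only an example) before the download gives an early warning instead of a failure halfway through the export.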

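cd /home
mkdir kubekey
cd kubekey/
export KKZONE=cn
# Run the download command to get the latest kk (because of network limits it may need several attempts)
curl -sfL https://get-kk.kubesphere.io | sh -
# Or pin a specific version with the command below
curl -sfL https://get-kk.kubesphere.io | VERSION=v3.0.13 sh -

Getting the operating system dependency packages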

This lab environment runs x64 CentOS 7.9. Download the ISO file that matches your operating system from https://github.com/kubesphere/kubekey/releases

wget https://github.com/kubesphere/kubekey/releases/download/v3.0.12/centos7-rpms-amd64.iso

File directory structure:

- kubekey-v3.0.13-linux-amd64.tar.gz

- kk

- centos7-rpms-amd64.iso

Generating the manifest configuration

vim ksp-v3.4.1-manifest.yaml

Edit ksp-v3.4.1-manifest.yaml

Change localPath under repository to the location where centos7-rpms-amd64.iso is stored.

The last 17 images are missing from the official images-list file and must be added to the list by hand.
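Turning the official image list into manifest entries is mechanical, so it can be scripted. The sketch below assumes that images-list.txt (from the ks-installer release page) holds one image reference per line and that lines starting with `#` are section headers; the function name `images_to_manifest_items` is made up for illustration. The missing images still have to be appended by hand.

```shell
#!/usr/bin/env bash

# Convert an image list (one reference per line) into YAML list items for spec.images.
# Assumption: lines starting with '#' are section headers; blank lines are ignored.
images_to_manifest_items() {
  sed -e 's/[[:space:]]*$//' -e '/^#/d' -e '/^$/d' -e 's/^/  - /' "$@"
}

# Small demonstration with inline sample data instead of the real file.
images_to_manifest_items <<'EOF'
##k8s-images
registry.cn-beijing.aliyuncs.com/kubesphereio/pause:3.9

registry.cn-beijing.aliyuncs.com/kubesphereio/coredns:1.9.3
EOF
```

With the real file, `images_to_manifest_items images-list.txt` prints lines that can be pasted under `images:` in the manifest.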

wget https://github.com/kubesphere/ks-installer/releases/download/v3.4.1/images-list.txt

To avoid the pitfalls, simply use the following configuration.

apiVersion: kubekey.kubesphere.io/v1alpha2
kind: Manifest
metadata:
  name: sample
spec:
  arches:
  - amd64
  operatingSystems:
  - arch: amd64
    type: linux
    id: centos
    version: "7"
    repository:
      iso:
        localPath: /home/kubekey/centos7-rpms-amd64.iso
        url:
  kubernetesDistributions:
  - type: kubernetes
    version: v1.23.15
  components:
    helm:
      version: v3.9.0
    cni:
      version: v1.2.0
    etcd:
      version: v3.4.13
    calicoctl:
      version: v3.23.2
    containerRuntimes:
    - type: docker
      version: 20.10.8
    - type: containerd
      version: 1.6.4
    crictl:
      version: v1.24.0
    harbor:
      version: v2.5.3
    docker-compose:
      version: v2.2.2
    docker-registry:
      version: "2"
  images:
  - docker.io/kubesphere/ks-installer:v3.4.1
  # - registry.cn-beijing.aliyuncs.com/kubesphereio/ks-installer:v3.4.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/ks-apiserver:v3.4.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/ks-console:v3.4.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/ks-controller-manager:v3.4.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kubectl:v1.20.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kubefed:v0.8.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/tower:v0.2.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/minio:RELEASE.2019-08-07T01-59-21Z
  - registry.cn-beijing.aliyuncs.com/kubesphereio/mc:RELEASE.2019-08-07T23-14-43Z
  - registry.cn-beijing.aliyuncs.com/kubesphereio/snapshot-controller:v4.0.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/nginx-ingress-controller:v1.3.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/defaultbackend-amd64:1.4
  - registry.cn-beijing.aliyuncs.com/kubesphereio/metrics-server:v0.4.2
  - docker.io/library/redis:5.0.14-alpine
  # - registry.cn-beijing.aliyuncs.com/kubesphereio/redis:5.0.14-alpine
  - registry.cn-beijing.aliyuncs.com/kubesphereio/haproxy:2.0.25-alpine
  - registry.cn-beijing.aliyuncs.com/kubesphereio/alpine:3.14
  - registry.cn-beijing.aliyuncs.com/kubesphereio/openldap:1.3.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/netshoot:v1.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/cloudcore:v1.13.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/iptables-manager:v1.13.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/edgeservice:v0.3.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/gatekeeper:v3.5.2
  - registry.cn-beijing.aliyuncs.com/kubesphereio/openpitrix-jobs:v3.3.2
  - registry.cn-beijing.aliyuncs.com/kubesphereio/devops-apiserver:ks-v3.4.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/devops-controller:ks-v3.4.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/devops-tools:ks-v3.4.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/ks-jenkins:v3.4.0-2.319.3-1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/inbound-agent:4.10-2
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-base:v3.2.2
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-nodejs:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-maven:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-maven:v3.2.1-jdk11
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-python:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-go:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-go:v3.2.2-1.16
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-go:v3.2.2-1.17
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-go:v3.2.2-1.18
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-base:v3.2.2-podman
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-nodejs:v3.2.0-podman
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-maven:v3.2.0-podman
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-maven:v3.2.1-jdk11-podman
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-python:v3.2.0-podman
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-go:v3.2.0-podman
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-go:v3.2.2-1.16-podman
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-go:v3.2.2-1.17-podman
  - registry.cn-beijing.aliyuncs.com/kubesphereio/builder-go:v3.2.2-1.18-podman
  - registry.cn-beijing.aliyuncs.com/kubesphereio/s2ioperator:v3.2.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/s2irun:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/s2i-binary:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/tomcat85-java11-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/tomcat85-java11-runtime:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/tomcat85-java8-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/tomcat85-java8-runtime:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/java-11-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/java-8-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/java-8-runtime:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/java-11-runtime:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/nodejs-8-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/nodejs-6-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/nodejs-4-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/python-36-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/python-35-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/python-34-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/python-27-centos7:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/argocd:v2.3.3
  - registry.cn-beijing.aliyuncs.com/kubesphereio/argocd-applicationset:v0.4.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/dex:v2.30.2
  - docker.io/library/redis:6.2.6-alpine
  # - registry.cn-beijing.aliyuncs.com/kubesphereio/redis:6.2.6-alpine
  - registry.cn-beijing.aliyuncs.com/kubesphereio/configmap-reload:v0.7.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/prometheus:v2.39.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/prometheus-config-reloader:v0.55.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/prometheus-operator:v0.55.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-rbac-proxy:v0.11.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-state-metrics:v2.6.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/node-exporter:v1.3.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/alertmanager:v0.23.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/thanos:v0.31.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/grafana:8.3.3
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-rbac-proxy:v0.11.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/notification-manager-operator:v2.3.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/notification-manager:v2.3.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/notification-tenant-sidecar:v3.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/elasticsearch-curator:v5.7.6
  - registry.cn-beijing.aliyuncs.com/kubesphereio/opensearch-curator:v0.0.5
  - registry.cn-beijing.aliyuncs.com/kubesphereio/elasticsearch-oss:6.8.22
  - registry.cn-beijing.aliyuncs.com/kubesphereio/opensearch:2.6.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/opensearch-dashboards:2.6.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/fluentbit-operator:v0.14.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/docker:19.03
  - registry.cn-beijing.aliyuncs.com/kubesphereio/fluent-bit:v1.9.4
  - registry.cn-beijing.aliyuncs.com/kubesphereio/log-sidecar-injector:v1.2.0
  - docker.elastic.co/beats/filebeat:6.7.0
  # - registry.cn-beijing.aliyuncs.com/kubesphereio/filebeat:6.7.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-events-operator:v0.6.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-events-exporter:v0.6.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-events-ruler:v0.6.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-auditing-operator:v0.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-auditing-webhook:v0.2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/pilot:1.14.6
  - registry.cn-beijing.aliyuncs.com/kubesphereio/proxyv2:1.14.6
  - registry.cn-beijing.aliyuncs.com/kubesphereio/jaeger-operator:1.29
  - registry.cn-beijing.aliyuncs.com/kubesphereio/jaeger-agent:1.29
  - registry.cn-beijing.aliyuncs.com/kubesphereio/jaeger-collector:1.29
  - registry.cn-beijing.aliyuncs.com/kubesphereio/jaeger-query:1.29
  - registry.cn-beijing.aliyuncs.com/kubesphereio/jaeger-es-index-cleaner:1.29
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kiali-operator:v1.50.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kiali:v1.50
  - registry.cn-beijing.aliyuncs.com/kubesphereio/busybox:1.31.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/nginx:1.14-alpine
  - registry.cn-beijing.aliyuncs.com/kubesphereio/wget:1.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/hello:plain-text
  - docker.io/library/wordpress:4.8-apache
  # - registry.cn-beijing.aliyuncs.com/kubesphereio/wordpress:4.8-apache
  - registry.cn-beijing.aliyuncs.com/kubesphereio/hpa-example:latest
  - registry.cn-beijing.aliyuncs.com/kubesphereio/fluentd:v1.4.2-2.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/perl:latest
  - docker.io/istio/examples-bookinfo-productpage-v1:1.16.2
  # - registry.cn-beijing.aliyuncs.com/kubesphereio/examples-bookinfo-productpage-v1:1.16.2
  - registry.cn-beijing.aliyuncs.com/kubesphereio/examples-bookinfo-reviews-v1:1.16.2
  - registry.cn-beijing.aliyuncs.com/kubesphereio/examples-bookinfo-reviews-v2:1.16.2
  - docker.io/istio/examples-bookinfo-details-v1:1.16.2
  # - registry.cn-beijing.aliyuncs.com/kubesphereio/examples-bookinfo-details-v1:1.16.2
  - docker.io/istio/examples-bookinfo-ratings-v1:1.16.3
  # - registry.cn-beijing.aliyuncs.com/kubesphereio/examples-bookinfo-ratings-v1:1.16.3
  - registry.cn-beijing.aliyuncs.com/kubesphereio/scope:1.13.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/pause:3.8
  - registry.cn-beijing.aliyuncs.com/kubesphereio/pause:3.9
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-apiserver:v1.26.5
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-controller-manager:v1.26.5
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-scheduler:v1.26.5
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-proxy:v1.26.5
  - registry.cn-beijing.aliyuncs.com/kubesphereio/k8s-dns-node-cache:1.15.12
  - registry.cn-beijing.aliyuncs.com/kubesphereio/coredns:1.9.3
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kube-controllers:v3.26.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/cni:v3.26.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/node:v3.26.1
  - registry.cn-beijing.aliyuncs.com/kubesphereio/pod2daemon-flexvol:v3.26.1
  - docker.io/library/haproxy:2.3
  # - registry.cn-beijing.aliyuncs.com/kubesphereio/haproxy:2.3
  - registry.cn-beijing.aliyuncs.com/kubesphereio/provisioner-localpv:3.3.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/linux-utils:3.3.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kubectl:v1.22.0
  - registry.cn-beijing.aliyuncs.com/kubesphereio/kubectl:v1.21.0
  registry:
    auths: {}

Exporting the artifact

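Export the artifact

- An artifact is a tgz package, exported according to the contents of the specified manifest file, that contains the image tarballs and the related binaries.

- The KubeKey commands for initializing the image registry, creating a cluster, adding nodes, and upgrading a cluster all accept an artifact. KubeKey unpacks it automatically and uses the unpacked files directly while running the command.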

export KKZONE=cn

./kk artifact export -m ksp-v3.4.1-manifest.yaml -o ksp-v3.4.1-artifact.tar.gz
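Because the export pulls many images over the network, a transient failure can abort a long run. The helper below is a generic retry sketch, not a KubeKey feature; the name `retry`, the attempt count, and the delay are assumptions, and retrying will not help when a registry is consistently unreachable.

```shell
#!/usr/bin/env bash

# Run a command up to N times, sleeping between attempts, and stop at the first success.
# Usage: retry <attempts> <delay-seconds> <command> [args...]
retry() {
  local attempts="$1" delay="$2" n=1
  shift 2
  until "$@"; do
    if [ "$n" -ge "$attempts" ]; then
      echo "giving up after $n attempts: $*" >&2
      return 1
    fi
    n=$((n + 1))
    sleep "$delay"
  done
}

# Example invocation (left commented out so the block has no side effects):
# retry 3 30 ./kk artifact export -m ksp-v3.4.1-manifest.yaml -o ksp-v3.4.1-artifact.tar.gz
```

The same wrapper can be put around the `curl` download of kk, which the earlier comment says sometimes needs several attempts.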

Problems when exporting the artifact

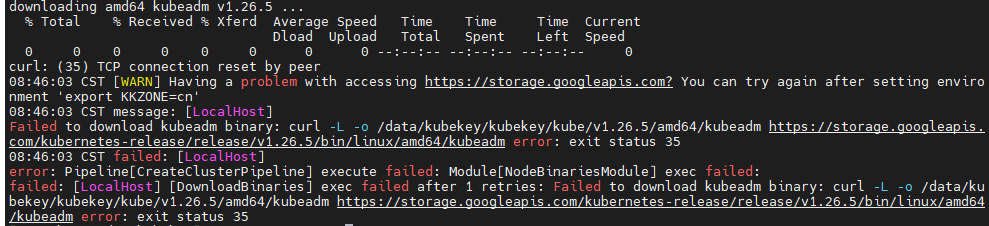

Problem 1: a network problem prevents the configuration from downloading

...read: connection reset by peer CST failed: [LocalHost] error: Pipeline[ArtifactExportPipeline] execute failed: Module[CopyImagesToLocalModule] exec failed: failed: [LocalHost] [SaveImages] exec failed after 1 retries: creating an updated image manifest: ...

Solution: switch the Aliyun image to the official docker.io image. For a few images you have to look up the exact address yourself.

Example:

Replace registry.cn-beijing.aliyuncs.com/kubesphereio/ks-installer:v3.4.1 with docker.io/kubesphere/ks-installer:v3.4.1

Problem 2: the environment variable is not set

- Solution: run export KKZONE=cn

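Uploading the offline packages (deployment starts here)

Upload the following offline deployment packages (KubeKey and the artifact) to a directory on the deployment node in the offline environment (/data in this example; choose whatever suits your setup), and create a kubekey folder.

- KubeKey: kubekey-v3.0.13.tar.gz

- Artifact: ksp-v3.4.1-artifact.tar.gz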

mkdir /data/kubekey

The directory structure is as follows:

Extracting KubeKey

cd /data

mv ksp-v3.4.1-artifact.tar.gz kubekey

tar -zxvf kubekey-v3.0.13.tar.gz -C kubekey

cd /data/kubekey

Creating the offline cluster configuration file

./kk create config --with-kubesphere v3.4.1 --with-kubernetes v1.26.5 -f offline.yaml

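Initializing the K8s cluster

Modifying the cluster configuration

Edit offline.yaml

- Edit hosts: set each node's IP, SSH user, SSH password, and SSH port

- Edit roleGroups: specify the etcd and control-plane nodes

Adding host name entries for the servers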

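$ vi /etc/hosts
192.168.220.139 k8s-master
192.168.220.140 k8s-node1
192.168.220.141 k8s-node2

Setting up passwordless SSH login between the servers

ssh-keygen -t rsa
ssh-copy-id k8s-node1
ssh-copy-id k8s-node2

With this in place, the greeting step between the cluster nodes later on runs fast enough not to time out, so the timeout setting is no longer needed.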
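A quick way to confirm that every node name resolves locally is to look each one up in the hosts file. This is a small sketch under the assumption that the names are the three used in this walkthrough; `missing_hosts` is an illustrative helper, not a standard tool.

```shell
#!/usr/bin/env bash

# Print every given host name that has no entry in the given hosts file.
missing_hosts() {
  local hosts_file="$1" name
  shift
  for name in "$@"; do
    # A name counts as present when it appears as a whole field (after the IP) on a non-comment line.
    if ! awk -v h="$name" '
          !/^[ \t]*#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }
          END { exit !found }' "$hosts_file"; then
      echo "$name"
    fi
  done
}

# Lists whichever of the three node names are still missing on this machine.
missing_hosts /etc/hosts k8s-master k8s-node1 k8s-node2
```

An empty result means all three names are present; the check needs to pass on every node, not only on the master.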

Modifying the cluster information

spec:
  hosts:
  - {name: k8s-master, address: 192.168.220.139, internalAddress: 192.168.220.139, user: root, password: "admin", timeout: 120}
  - {name: k8s-node1, address: 192.168.220.140, internalAddress: 192.168.220.140, user: root, password: "admin", timeout: 120}
  - {name: k8s-node2, address: 192.168.220.141, internalAddress: 192.168.220.141, user: root, password: "admin", timeout: 120}
  roleGroups:
    etcd:
    - k8s-master
    control-plane:
    - k8s-master
    worker:
    - k8s-node1
    - k8s-node2
    registry:
    - k8s-master
  storage:
    openebs:
      basePath: /data/openebs/local
  registry:
    type: harbor
    auths:
      "registry.opsman.top":
        username: admin
        password: Harbor12345
        certsPath: "/etc/docker/certs.d/registry.opsman.top"
    privateRegistry: "registry.opsman.top"
    namespaceOverride: "kubesphereio"
    registryMirrors: []
    insecureRegistries: []
  addons: []

Modifying the cluster feature configuration

spec:
  namespace_override: kubesphereio
  # Enable etcd monitoring
  etcd:
    monitoring: true
  # Enable the KubeSphere alerting system
  alerting:
    enabled: true
  # Enable KubeSphere audit logs
  auditing:
    enabled: true
  # Enable the KubeSphere DevOps system
  devops:
    enabled: true
  # Enable the KubeSphere events system
  events:
    enabled: true
  # Enable the KubeSphere logging system
  logging:
    enabled: true
  # Enable Metrics Server
  metrics_server:
    enabled: true
  # Enable network policies, pod IP pools, and the service topology graph (listed in the same order as the settings below)
  network:
    networkpolicy:
      enabled: true
    ippool:
      type: calico
    topology:
      type: weave-scope
  # Enable the App Store
  openpitrix:
    store:
      enabled: true
  # Enable the KubeSphere service mesh (Istio)
  servicemesh:
    enabled: true

Complete cluster configuration (can be used as a drop-in replacement)

apiVersion: kubekey.kubesphere.io/v1alpha2
kind: Cluster
metadata:
  name: sample
spec:
  hosts:
  - {name: k8s-master, address: 192.168.220.139, internalAddress: 192.168.220.139, user: root, password: "admin", timeout: 120}
  - {name: k8s-node1, address: 192.168.220.140, internalAddress: 192.168.220.140, user: root, password: "admin", timeout: 120}
  - {name: k8s-node2, address: 192.168.220.141, internalAddress: 192.168.220.141, user: root, password: "admin", timeout: 120}
  roleGroups:
    etcd:
    - k8s-master
    control-plane:
    - k8s-master
    worker:
    - k8s-node1
    - k8s-node2
    registry:
    - k8s-master
  controlPlaneEndpoint:
    ## Internal loadbalancer for apiservers
    # internalLoadbalancer: haproxy
    domain: lb.kubesphere.local
    address: ""
    port: 6443
  kubernetes:
    version: v1.26.5
    clusterName: cluster.local
    autoRenewCerts: true
    containerManager: containerd
  etcd:
    type: kubekey
  network:
    plugin: calico
    kubePodsCIDR: 10.233.64.0/18
    kubeServiceCIDR: 10.233.0.0/18
    ## multus support. https://github.com/k8snetworkplumbingwg/multus-cni
    multusCNI:
      enabled: false
  storage:
    openebs:
      basePath: /data/openebs/local
  registry:
    type: harbor
    auths:
      "registry.opsman.top":
        username: admin
        password: Harbor12345
        certsPath: "/etc/docker/certs.d/registry.opsman.top"
    privateRegistry: "registry.opsman.top"
    namespaceOverride: "kubesphereio"
    registryMirrors: []
    insecureRegistries: []
  addons: []
---
apiVersion: installer.kubesphere.io/v1alpha1
kind: ClusterConfiguration
metadata:
  name: ks-installer
  namespace: kubesphere-system
  labels:
    version: v3.4.1
spec:
  namespace_override: kubesphereio
  persistence:
    storageClass: ""
  authentication:
    jwtSecret: ""
  local_registry: ""
  # dev_tag: ""
  # Enable etcd monitoring
  etcd:
    monitoring: true
    endpointIps: localhost
    port: 2379
    tlsEnable: true
  common:
    core:
      console:
        enableMultiLogin: true
        port: 30880
        type: NodePort
    # apiserver:
    #   resources: {}
    # controllerManager:
    #   resources: {}
    redis:
      enabled: false
      enableHA: false
      volumeSize: 2Gi
    openldap:
      enabled: false
      volumeSize: 2Gi
    minio:
      volumeSize: 20Gi
    monitoring:
      # type: external
      endpoint: http://prometheus-operated.kubesphere-monitoring-system.svc:9090
      GPUMonitoring:
        enabled: false
    gpu:
      kinds:
      - resourceName: "nvidia.com/gpu"
        resourceType: "GPU"
        default: true
    es:
      # master:
      #   volumeSize: 4Gi
      #   replicas: 1
      #   resources: {}
      # data:
      #   volumeSize: 20Gi
      #   replicas: 1
      #   resources: {}
      enabled: false
      logMaxAge: 7
      elkPrefix: logstash
      basicAuth:
        enabled: false
        username: ""
        password: ""
      externalElasticsearchHost: ""
      externalElasticsearchPort: ""
    opensearch:
      # master:
      #   volumeSize: 4Gi
      #   replicas: 1
      #   resources: {}
      # data:
      #   volumeSize: 20Gi
      #   replicas: 1
      #   resources: {}
      enabled: true
      logMaxAge: 7
      opensearchPrefix: whizard
      basicAuth:
        enabled: true
        username: "admin"
        password: "admin"
      externalOpensearchHost: ""
      externalOpensearchPort: ""
      dashboard:
        enabled: false
  # Enable the KubeSphere alerting system
  alerting:
    enabled: true
    # thanosruler:
    #   replicas: 1
    #   resources: {}
  # Enable KubeSphere audit logs
  auditing:
    enabled: true
    # operator:
    #   resources: {}
    # webhook:
    #   resources: {}
  # Enable the KubeSphere DevOps system
  devops:
    enabled: true
    jenkinsCpuReq: 0.5
    jenkinsCpuLim: 1
    jenkinsMemoryReq: 4Gi
    jenkinsMemoryLim: 4Gi
    jenkinsVolumeSize: 16Gi
  # Enable the KubeSphere events system
  events:
    enabled: true
    # operator:
    #   resources: {}
    # exporter:
    #   resources: {}
    ruler:
      enabled: true
      replicas: 2
      # resources: {}
  # Enable the KubeSphere logging system
  logging:
    enabled: true
    logsidecar:
      enabled: true
      replicas: 2
      # resources: {}
  # Enable Metrics Server
  metrics_server:
    enabled: true
  monitoring:
    storageClass: ""
    node_exporter:
      port: 9100
      # resources: {}
    # kube_rbac_proxy:
    #   resources: {}
    # kube_state_metrics:
    #   resources: {}
    # prometheus:
    #   replicas: 1
    #   volumeSize: 20Gi
    #   resources: {}
    #   operator:
    #     resources: {}
    # alertmanager:
    #   replicas: 1
    #   resources: {}
    # notification_manager:
    #   resources: {}
    #   operator:
    #     resources: {}
    #   proxy:
    #     resources: {}
    gpu:
      nvidia_dcgm_exporter:
        enabled: false
        # resources: {}
  multicluster:
    clusterRole: none
  # Enable network policies, pod IP pools, and the service topology graph (listed in the same order as the settings below)
  network:
    networkpolicy:
      enabled: true
    ippool:
      type: calico
    topology:
      type: weave-scope
  # Enable the App Store
  openpitrix:
    store:
      enabled: true
  # Enable the KubeSphere service mesh (Istio)
  servicemesh:
    enabled: true
    istio:
      components:
        ingressGateways:
        - name: istio-ingressgateway
          enabled: false
        cni:
          enabled: false
  edgeruntime:
    enabled: false
    kubeedge:
      enabled: false
      cloudCore:
        cloudHub:
          advertiseAddress:
          - ""
        service:
          cloudhubNodePort: "30000"
          cloudhubQuicNodePort: "30001"
          cloudhubHttpsNodePort: "30002"
          cloudstreamNodePort: "30003"
          tunnelNodePort: "30004"
        # resources: {}
        # hostNetWork: false
      iptables-manager:
        enabled: true
        mode: "external"
        # resources: {}
      # edgeService:
      #   resources: {}
  gatekeeper:
    enabled: false
    # controller_manager:
    #   resources: {}
    # audit:
    #   resources: {}
  terminal:
    timeout: 600

Installing Harbor and pushing the images

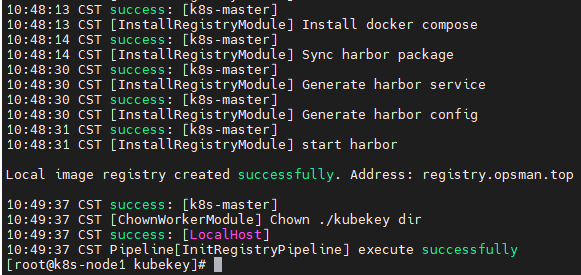

Installing Harbor

The install command is as follows:

cd /data/kubekey

./kk init registry -f offline.yaml -a ksp-v3.4.1-artifact.tar.gz

When the installation succeeds, the following message is shown:

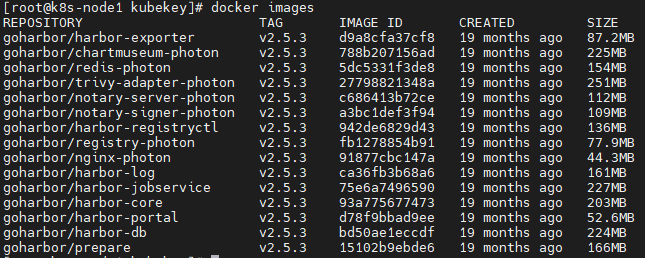

Checking the installed version

docker images

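Problems when installing Harbor

Problem 1: establishing the SSH connection takes too long

error: Pipeline[CreateClusterPipeline] execute failed: Module[GreetingsModule] exec failed: failed: [k8s-node1] execute task timeout, Timeout=1m30s

- Solution: raise the timeout in hosts, for example timeout: 120. Setting up passwordless login between the servers is still the recommended fix.

Problem 2: the installer reports no space left on device

- Solution: give the virtual machine enough storage. For expanding the disk, see: VMware设置CentOS7系统磁盘扩容 (expanding a CentOS 7 system disk in VMware)

Problem 3: certificate verification fails

Solution: change the configuration to the following

registry:
  type: harbor
  auths:
    "registry.opsman.top":
      username: admin
      password: Harbor12345
      certsPath: "/etc/docker/certs.d/registry.opsman.top"
  privateRegistry: "registry.opsman.top"
  namespaceOverride: "kubesphereio"
  registryMirrors: []
  insecureRegistries: []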

Creating projects in Harbor

Adding the script

vim create_project_harbor.sh

chmod 755 create_project_harbor.sh

Add the following content

#!/usr/bin/env bash

# default: https://dockerhub.kubekey.local
url="https://registry.opsman.top"

# Default user and password for the Harbor registry (change them in production)
user="admin"
passwd="Harbor12345"

harbor_projects=(library
    kubesphere
    calico
    coredns
    openebs
    csiplugin
    minio
    mirrorgooglecontainers
    osixia
    prom
    thanosio
    jimmidyson
    grafana
    elastic
    istio
    jaegertracing
    jenkins
    weaveworks
    openpitrix
    joosthofman
    nginxdemos
    fluent
    kubeedge
    kubesphereio
)

for project in "${harbor_projects[@]}"; do
    echo "creating $project"
    curl -k -u "${user}:${passwd}" -X POST -H "Content-Type: application/json" "${url}/api/v2.0/projects" -d "{ \"project_name\": \"${project}\", \"public\": true}"
done

Run the script to create the projects

./create_project_harbor.sh
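To see which namespaces the manifest's images actually use, the image list can be reduced to its unique namespace components. This is an illustrative sketch: `image_namespaces` is a made-up helper, it assumes references of the form registry/namespace/name:tag, and which projects Harbor really needs also depends on settings such as namespaceOverride.

```shell
#!/usr/bin/env bash

# Read image references (optionally written as YAML list items) from stdin and
# print each unique namespace, i.e. the path component just before the image name.
# Assumption: references look like [registry/]namespace/name:tag.
image_namespaces() {
  sed -e '/^[[:space:]]*#/d' -e 's/^[[:space:]]*-[[:space:]]*//' |
    awk -F/ 'NF >= 2 { print $(NF - 1) }' |
    sort -u
}

# Demonstration with three entries taken from the manifest in this article.
image_namespaces <<'EOF'
  - docker.io/library/redis:5.0.14-alpine
  - registry.cn-beijing.aliyuncs.com/kubesphereio/pause:3.9
  - docker.io/istio/examples-bookinfo-details-v1:1.16.2
EOF
```

Feed it only the entries under `images:`; other manifest lines that contain slashes, such as localPath, would otherwise be misread as image references.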

Pushing the offline images

./kk artifact image push -f offline.yaml -a ksp-v3.4.1-artifact.tar.gz

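registry:
- k8s-master

The image registry is k8s-master. Once the push finishes you can open the Harbor registry at https://ip. With my k8s-master IP that is https://192.168.220.139/

- Account: admin

- Password: Harbor12345

You can see that the images have been pushed.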

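Installing KubeSphere and the K8s cluster

Installing KubeSphere

- --with-packages: required if the operating system dependencies need to be installed

- --skip-push-images: skips pushing the images, because they were already pushed to the private registry earlier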

export KKZONE=cn

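./kk create cluster -f offline.yaml -a ksp-v3.4.1-artifact.tar.gz --with-packages --skip-push-images

KubeKey first checks the dependencies and other detailed requirements for deploying K8s. Once the checks pass, it asks you to confirm the installation. Type yes and press ENTER to continue.

The K8s control plane initializes successfully

Once a progress bar starts moving back and forth, victory is in sight; just wait.

- If it still has not succeeded after a long time, check kubectl get po -A. If several pods are stuck in Pending or a failed state, find out why (the nastiest cause may be an unsuitable CentOS version).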
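On a cluster with many pods, the ones that need attention are easy to miss in the full listing. A small filter over the default `kubectl get po -A` columns (NAMESPACE, NAME, READY, STATUS, ...) can help; this is a sketch, `not_ready_pods` is an illustrative name, and the Running line in the sample is made up.

```shell
#!/usr/bin/env bash

# Read `kubectl get po -A` output from stdin and print namespace/name plus status
# for every pod whose STATUS column is neither Running nor Completed.
not_ready_pods() {
  awk 'NR > 1 && $4 != "Running" && $4 != "Completed" { print $1 "/" $2 " " $4 }'
}

# Demonstration on sample text; on a real cluster use: kubectl get po -A | not_ready_pods
not_ready_pods <<'EOF'
NAMESPACE           NAME                            READY   STATUS    RESTARTS   AGE
kube-system         coredns-example                 1/1     Running   0          9d
kubesphere-system   ks-installer-dd9c4df6c-ff9dw    0/1     Pending   0          9d
EOF
```

For each pod it reports, `kubectl describe po <name> -n <namespace>` shows the scheduling or image-pull events that explain the state.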

Viewing the logs

- If there are no errors, congratulations!

kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l app=ks-installer -o jsonpath='{.items[0].metadata.name}') -f

Checking the node status

kubectl get nodes

Log in to the web console at http://{IP}:30880

- Example: http://192.168.220.139:30880

- Account: admin

- Password: P@88w0rd

Problems when installing KubeSphere

Problem 1: do not forget to set the environment variable

- Solution: run export KKZONE=cn (there is no need to make it permanent)

Problem 2: the IP address was changed dynamically

The connection to the server lb.kubesphere.local:6443 was refused - did you specify the right host or port?
14:02:55 CST message: [k8s-master] Failed to set some node status fields" err="failed to validate nodeIP: node IP: \"192.168.220.139\

Solution: bind a static IP

- If the netstat command is unavailable, install it with yum -y install net-tools

$ systemctl status kubelet # shows a startup error
$ netstat -ntlp | grep 6443 # prints nothing
$ vi /etc/sysconfig/network-scripts/ifcfg-ens33
BOOTPROTO=static
ONBOOT=yes
IPADDR=192.168.220.139
GATEWAY=192.168.220.2
NETMASK=255.255.255.0
DNS1=114.114.114.114
$ systemctl restart network

For similar cases, see: 如何排查解决:The connection to the server: 6443 was refused - did you specify the right host or port
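Whether an interface is still configured for DHCP can be checked before the cluster is created. The sketch below reads the BOOTPROTO value from an ifcfg file; the helper names are illustrative, and the ens33 file name is simply the one used in this walkthrough.

```shell
#!/usr/bin/env bash

# Print the BOOTPROTO value found in an ifcfg file (quotes and whitespace removed).
bootproto_of() {
  awk -F= '$1 == "BOOTPROTO" { gsub(/["\047 \t\r]/, "", $2); v = $2 } END { print v }' "$1"
}

# Succeed only if the file sets BOOTPROTO=static.
is_static_ip() {
  [ "$(bootproto_of "$1")" = "static" ]
}

# Path from this article; adjust the interface name to match your machine.
cfg=/etc/sysconfig/network-scripts/ifcfg-ens33
if [ -r "$cfg" ]; then
  if is_static_ip "$cfg"; then
    echo "$cfg uses a static address"
  else
    echo "$cfg is not static (BOOTPROTO=$(bootproto_of "$cfg"))"
  fi
fi
```

Running it on each node before `kk create cluster` catches a DHCP-assigned address that could change under the cluster later.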

Problem 3: possibly a network issue

- Solution: run the installation step again

Problem 4: node error

IPVS: RR UDP 10.133.0.3 53 no destination available

Solution: run the following command

dmesg -n 1

Problem 5: stuck at Please wait for the installation to complete

Please wait for the installation to complete: >>---> 09:30:26 UTC failed: [master] error: Pipeline[CreateClusterPipeline] execute failed: Module[CheckResultModule] exec failed: failed: [master] execute task timeout, Timeout=7200000000000

Solution found online: the nodes carry an unschedulable taint (this did not solve it)

$ kubectl get po -A | grep ks-install
kubesphere-system ks-installer-dd9c4df6c-ff9dw 0/1 Pending 0 9d
$ kubectl describe po ks-installer-dd9c4df6c-ff9dw -n kubesphere-system
Warning FailedScheduling 8m11s default-scheduler 0/3 nodes are available: 1 node(s) had untolerated taint {node-role.kubernetes.io/control-plane: }, 2 node(s) had untolerated taint {node.kubernetes.io/not-ready: }. preemption: 0/3 nodes are available: 3 Preemption is not helpful for scheduling..
$ kubectl taint nodes --all node-role.kubernetes.io/master-
taint "node-role.kubernetes.io/master" not found
taint "node-role.kubernetes.io/master" not found
taint "node-role.kubernetes.io/master" not found

Actual cause: it is related to the CentOS version. The CentOS version and the KubeSphere version were incompatible, so a suitable version has to be installed.

I had been using CentOS-7-x86_64-DVD-1804.iso; the newly downloaded image is CentOS-7-x86_64-DVD-2009.iso
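The installed release can be read from /etc/centos-release, where the 1804 ISO corresponds to release 7.5.1804 and the 2009 ISO to 7.9.2009. The following sketch extracts that version string; `centos_release` is an illustrative helper name.

```shell
#!/usr/bin/env bash

# Print the version number from a CentOS release file,
# e.g. "CentOS Linux release 7.9.2009 (Core)" gives 7.9.2009.
centos_release() {
  sed -n 's/.*release \([0-9][0-9.]*\).*/\1/p' "${1:-/etc/centos-release}"
}

# Only query the real file when it exists (it is absent on non-CentOS systems).
if [ -r /etc/centos-release ]; then
  echo "CentOS release: $(centos_release)"
fi
```

Comparing the printed version across all nodes before installing avoids discovering a mismatch only after the installer has timed out.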

- Turn off the firewall

systemctl stop firewalld
systemctl disable firewalld

- Turn off SELinux

# Temporary, no reboot needed
setenforce 0
# Permanent, requires a reboot: change SELINUX=enforcing to SELINUX=disabled
vim /etc/selinux/config

- Turn off swap

# Turn swap off temporarily
swapoff -a
# Turn the swap partition off permanently
sed -ri 's/.*swap.*/#&/' /etc/fstab

Problem 6: HTTPS handshake timeout

$ kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l app=ks-installer -o jsonpath='{.items[0].metadata.name}') -f
Error from server: Get "https://192.168.220.140:10250/containerLogs/kubesphere-system/ks-installer-dd9c4df6c-75jr8/installer?follow=true": net/http: TLS handshake timeout

Solution found online: run the following on the master node (this did not solve it)

kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml --request-timeout='0'

Problem 7: cannot log in

request to http://ks-apiserver/oauth/token failed, reason: connect ECONNREFUSED 10.233.51.80:80

Solution found online: reboot the virtual machine (this did not solve it)

kubectl get pod -n kubesphere-system

Checking showed that the containers were still being created...